This blog post accompanies our latest ebook: 15 A/B testing ideas for ecommerce email marketers. Click here to download.

It’s 2017, and the days of low-quality, irrelevant marketing messages are well and truly over; if your email doesn’t seem interesting to a recipient, it will be deleted, unsubscribed from or – dare we say it – marked as spam.

As a result, it’s critical that your email marketing is crafted in such a way that it actually resonates with your subscriber list on a personal level.

The only way to know how your email marketing is *really* performing right now is to look at the numbers. The numbers don’t lie. If they are good, that’s great, but if they’re not so good, changes are probably needed (for some context, the average open rate for ecommerce email is 16.75% and the average click rate is 2.32%).
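As a rough illustration of "looking at the numbers", the sketch below works out an open rate and a click rate for a single campaign and prints them next to the averages quoted above. The campaign figures are hypothetical, and measuring both rates as a share of delivered emails is an assumption; the way the quoted averages were calculated is not stated here, so treat the comparison as indicative only.

```python
# Averages quoted in this post for ecommerce email.
BENCHMARK_OPEN_RATE = 0.1675
BENCHMARK_CLICK_RATE = 0.0232


def campaign_rates(delivered, opens, clicks):
    """Return (open rate, click rate), both as a share of delivered emails.

    Assumption: rates are measured against delivered emails.
    """
    return opens / delivered, clicks / delivered


# Hypothetical campaign figures, for illustration only.
open_rate, click_rate = campaign_rates(delivered=20_000, opens=3_100, clicks=520)

print(f"Open rate:  {open_rate:.2%} (benchmark {BENCHMARK_OPEN_RATE:.2%})")
print(f"Click rate: {click_rate:.2%} (benchmark {BENCHMARK_CLICK_RATE:.2%})")
```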

Fortunately, A/B testing can help you go about making those changes.

Also known as split testing, A/B testing refers to the method of creating and delivering different versions of an email to different portions of your subscriber base to observe which variation performs best. A test will usually include just one variable (the part of the email that is different for each version), and one metric that will be used to measure success.
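To make the mechanics concrete, here is a minimal sketch of a two-variant test: subscribers are randomly split into two groups, and one metric (opens, in this case) is compared between them. The subscriber list and the results are hypothetical, and the two-proportion z-test is one common way to check whether a difference is likely to be more than chance, not a method prescribed by this post.

```python
import math
import random
from statistics import NormalDist


def split_subscribers(subscribers, seed=42):
    """Randomly assign subscribers to two (near-)equal groups, A and B."""
    shuffled = list(subscribers)
    random.Random(seed).shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical list of 10,000 subscriber IDs.
group_a, group_b = split_subscribers(range(10_000))

# Hypothetical results: number of recipients in each group who opened.
opens_a, opens_b = 800, 900
z, p_value = two_proportion_z_test(opens_a, len(group_a), opens_b, len(group_b))

print(f"A: {opens_a / len(group_a):.1%}  B: {opens_b / len(group_b):.1%}")
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value (0.05 is a common threshold) suggests the difference between the two versions is unlikely to be down to chance alone. Random assignment matters because it stops differences between the groups themselves from being mistaken for an effect of the variable under test.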

In this blog post, we’ll explore seven aspects of email marketing that every ecommerce marketer should be testing – as well as some ideas for how to test them. (For A/B testing best practice and advice on how to create great split testing hypotheses, download our ebook here.)

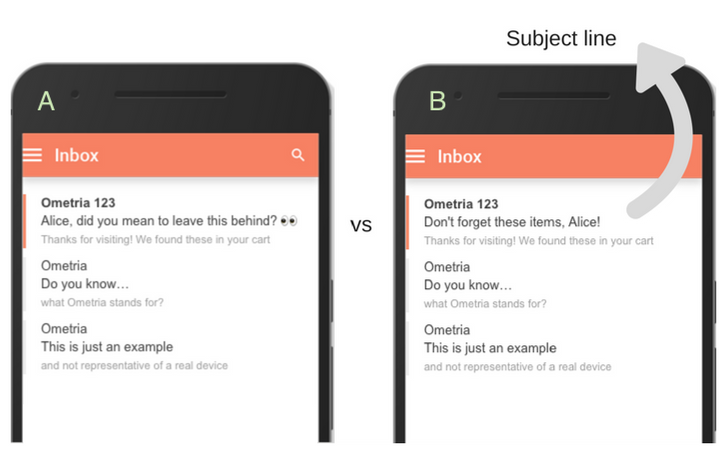

A/B testing a subject line can help a brand’s marketing success in both the short term and the long term; for example, it can be used to determine which of two versions works best for an isolated campaign, or to detect subtle patterns and trends that emerge over time.

Testing ideas:

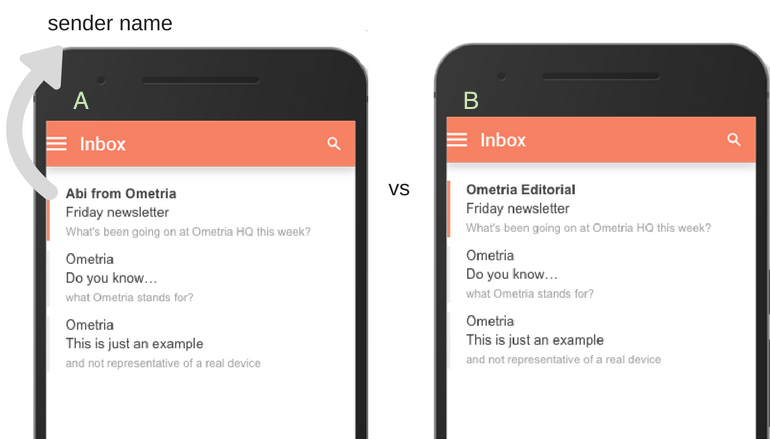

Who should you say your email is from? Would a first name work best? Or would it be more professional to use either a first and last name or the name of your brand?

It may be that different formats lend themselves better to different types of email; for example, a customer service email may call for a completely different tone from a fun newsletter.

Testing ideas:

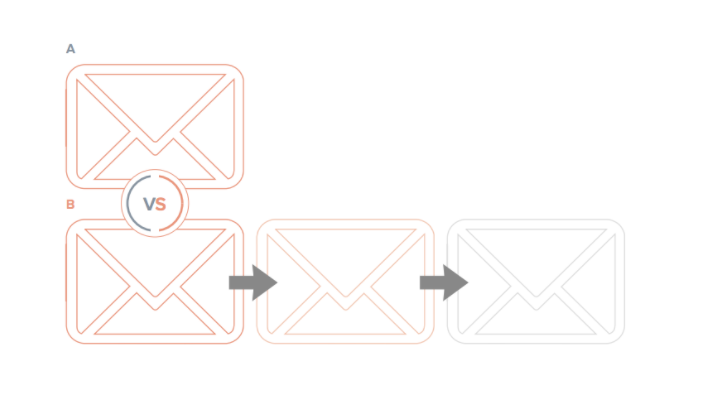

Does a one-off welcome email convert new subscribers to first-time buyers better than a multi-stage welcome series? What’s the optimal number of times you can remind a cart abandoner of what’s left in their cart before they tune out? You can use split testing to determine the number of emails that should be in an automated campaign.

You may want to test:

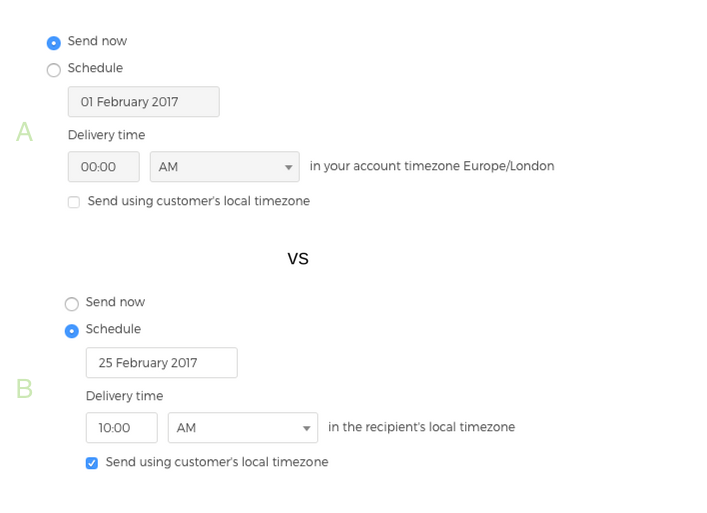

A/B test the send time of your emails to discover whether there’s a certain day and/or time your recipients are most likely to engage.
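As a sketch of how the results of a send-time test might be tallied, the snippet below groups a send log by send slot and works out the open rate for each. The log format, the slot labels and the data are all hypothetical, and the sample is far too small to draw conclusions from; a real test would need much larger groups.

```python
from collections import defaultdict

# Hypothetical send log: (send slot, whether the recipient opened the email).
send_log = [
    ("Tue 09:00", True), ("Tue 09:00", False),
    ("Tue 09:00", False), ("Tue 09:00", False),
    ("Thu 19:00", True), ("Thu 19:00", True),
    ("Thu 19:00", False), ("Thu 19:00", False),
]


def open_rate_by_slot(log):
    """Return {slot: open rate} for each send slot in the log."""
    sent = defaultdict(int)
    opened = defaultdict(int)
    for slot, was_opened in log:
        sent[slot] += 1
        opened[slot] += int(was_opened)
    return {slot: opened[slot] / sent[slot] for slot in sent}


rates = open_rate_by_slot(send_log)
best_slot = max(rates, key=rates.get)

for slot, rate in rates.items():
    print(f"{slot}: {rate:.0%} opened")
print(f"Best-performing slot in this sample: {best_slot}")
```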

Testing ideas:

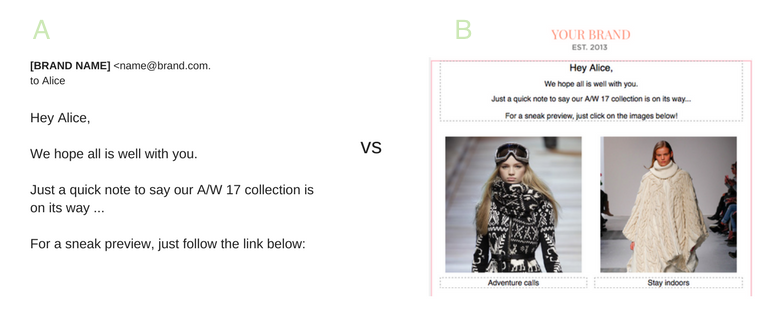

In an industry increasingly centred on the “visual” and what “looks good”, your email’s hero image is likely to influence whether recipients hit “delete” or “shop now”.

Testing ideas:

Other things you could experiment with here include user-generated content and/or social influencer marketing, where you use authentic images as opposed to photo shoots.

Thanks to gifs, emojis, Instagram posts, selfies, Snapchat (the list goes on…), images are a huge part of digital communication today. Even so, in some circumstances text-only remains best.

For example, certain automated emails – such as welcome emails, or messages sent just to your VIP customers – can sometimes work better as text-only, giving the impression that they have been personally (and manually) sent by a member of your team.

Testing ideas:

To discount or not to discount: that is the million-dollar question.

From 10% off in a welcome email to free international delivery when you spend over £40, for some brands incentives are a fundamental part of an email marketing strategy… but do they work for you? And if they do, which sort?

These are questions that can be answered via A/B testing.

Testing ideas:

The incentive itself: free returns vs free shipping

The presentation: % off vs £ off

The reward: free product vs free experience (such as a holiday)

This blog post is just a taste of what you can do with A/B testing in ecommerce; for more testing ideas, as well as professional advice on things to bear in mind when running your tests, download our ebook on the subject here.

Whilst it’s likely that, in the future, artificial intelligence will result in marketers spending less time checking in on campaign performance (and making small tweaks), and less time manually setting up A/B tests, for now this marketing experiment continues to be extremely powerful.
